Google’s Gemma 4 is an open model, specifically designed to bridge the gap between lightweight efficiency and advanced “agentic” reasoning.

Google has launched Gemma 4, the latest evolution of its family of open models. Built on the research foundations of Gemini 3, Gemma 4 is designed to bridge the gap between lightweight efficiency and advanced “agentic” reasoning. Billed as the most intelligent open models to date, the Gemma 4 family introduces several significant upgrades for developers and researchers.

Gemma 4: Core Model Capabilities

With Google’s official launch of Gemma 4, the landscape of open-source artificial intelligence has shifted once again. Here are some core capabilities of Gemma 4:

- Multimodal Native Support: Unlike earlier versions, Gemma 4’s native multimodal capability supports image, text, and audio inputs. It also generates high-quality text output across these diverse data types.

- Advanced Reasoning: Gemma 4 is purpose-built for agentic workflows, with improved logic for complex tasks, multi-step problem-solving, and tool use.

- Agentic Skills: Gemma 4 is well suited to building autonomous AI agents, with strong support for function calling, structured outputs such as JSON, and advanced code generation.

- Diverse Architectures: Gemma 4 is available in both Dense and Mixture-of-Experts (MoE) architectures, allowing users to choose between consistent performance and higher efficiency.
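To make the function-calling and structured-output point above concrete, here is a minimal sketch of how an application might consume a JSON tool call produced by a model. The tool name `get_weather`, the `name`/`arguments` schema, and the example string are all assumptions chosen for illustration; they are not Gemma 4's actual output format.

```python
import json


# Hypothetical tool; the name and signature are illustrative only.
def get_weather(city: str) -> str:
    return f"Weather lookup requested for {city}"


# Registry mapping tool names the model may emit to Python callables.
TOOLS = {"get_weather": get_weather}


def dispatch_tool_call(model_output: str) -> str:
    """Parse a JSON tool call emitted by a model and run the matching tool."""
    try:
        call = json.loads(model_output)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Model output is not valid JSON: {exc}") from exc

    if not isinstance(call, dict):
        raise ValueError("Tool call must be a JSON object")

    name = call.get("name")
    args = call.get("arguments", {})
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name!r}")
    if not isinstance(args, dict):
        raise ValueError("Tool arguments must be a JSON object")

    return TOOLS[name](**args)


# Stand-in for text a model might return; not real Gemma 4 output.
example_output = '{"name": "get_weather", "arguments": {"city": "Paris"}}'
print(dispatch_tool_call(example_output))
```

Validating the parsed object before dispatching matters in agentic setups, because a malformed or unexpected call should fail loudly rather than run the wrong tool.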

Model Size and Deployment

Gemma 4 models are designed to run everywhere, from massive data centers to local mobile devices:

| Model Size | Primary Use Case |
| --- | --- |
| 2B & 4B | Optimized for on-device AI on Android and edge devices. |
| 31B | High-performance reasoning; ranks highly on global AI leaderboards. |
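As a rough guide to why the smaller sizes target phones while the largest targets bigger hardware, the sketch below estimates the memory needed for model weights alone. It assumes the nominal parameter counts in the table are taken at face value and ignores activations, the KV cache, and runtime overhead, so real requirements will be higher. The precisions shown (16-bit and 4-bit) are common choices, not figures from Google.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in gigabytes (10^9 bytes)."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Nominal sizes from the table above; precisions are illustrative.
for size in (2, 4, 31):
    for bits in (16, 4):
        print(f"{size}B at {bits}-bit: ~{weight_memory_gb(size, bits):.1f} GB")
```

By this estimate, a 31B model at 4-bit precision needs about 15.5 GB for weights, while a 2B model at the same precision needs about 1 GB.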

Developer Ecosystem

- Local Intelligence: Gemma 4 is optimized for local execution through platforms like Android Studio and LM Studio, so developers can build AI applications that work without a cloud connection.

- Open Access: Released under the Apache 2.0 license, Gemma 4 is accessible on several major platforms, including Hugging Face, Google AI Studio, and Google Cloud.

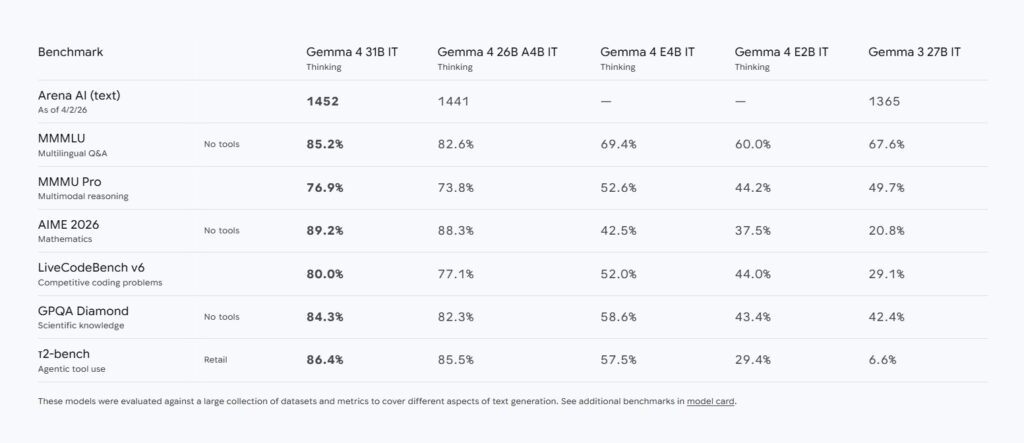

- Performance: The 31B model’s current top ranking on the Arena AI text leaderboard demonstrates Gemma 4’s strong performance and capability to rival much larger, proprietary systems, confirming the competitiveness of open models.
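For the local execution path mentioned in the list above, the following sketch shows how a request to a locally hosted model might be built. It assumes a local server that speaks the OpenAI-compatible chat completions format on port 1234, which is LM Studio's usual default. The model identifier `gemma-4-placeholder` is hypothetical; use whatever name your local server reports.

```python
import json
import urllib.request

# Assumption: an OpenAI-compatible server is listening locally on port 1234.
BASE_URL = "http://localhost:1234/v1"


def build_chat_request(prompt: str, model: str = "gemma-4-placeholder") -> urllib.request.Request:
    """Build (but do not send) a chat completion request for a local server."""
    payload = {
        "model": model,  # hypothetical identifier, replace with your local model name
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        f"{BASE_URL}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask_local_model(prompt: str) -> str:
    """Send the prompt and return the first reply; needs the local server running."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=60) as resp:
        body = json.load(resp)
    return body["choices"][0]["message"]["content"]
```

Calling `ask_local_model("Summarize this note")` only works while the local server is running; no data leaves the machine, which is the privacy benefit the article describes.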

Google’s Gemma 4 serves as the foundation for the next generation of Gemini Nano. This upgrade brings advanced agentic capabilities directly to the edge for better privacy and lower latency.


Gemma 4 Vs Gemini

Gemma 4 and Gemini both stem from core Google DeepMind research, but they are designed for different purposes. Gemma is an open-source family designed for local development, while Gemini is a closed-source, proprietary system built for high-scale cloud applications.

Key Differences at a Glance

| Feature | Gemma 4 | Gemini (1.5 / 2.0) |
| --- | --- | --- |
| Access | Open Source (Apache 2.0 License) | Proprietary API / Chat (Closed) |
| Deployment | Local/Edge (Phones, Laptops, Raspberry Pi) | Cloud-only (Google AI Studio, Vertex AI) |
| Model Sizes | Compact (E2B, E4B) to Mid-size (26B, 31B) | Massive (Pro, Ultra) to Small (Nano) |
| Context Window | Up to 256K tokens | Up to 1M – 2M+ tokens |
| Specialization | Efficiency, Agentic workflows, Privacy | Maximum performance, Large-scale data |


Which is Best to Use?

Both Gemma 4 and Gemini models have moved beyond simple text. However, they serve distinct purposes:

| Recommended Model | Scenario | Details |
| --- | --- | --- |
| Gemma 4 | Privacy (data remains on-device). | Ideal for cost savings on API usage and applications requiring offline mobile functionality. |
| Gemini | Need the absolute highest reasoning quality. | Best suited for handling massive datasets (over 1M context tokens) or when managed hardware infrastructure is preferred. |
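The guidance in the table can be expressed as a small decision helper. This is a sketch under stated assumptions: the function name, its parameters, and the choice to default to Gemma 4 for cost reasons are illustrative, and the 256K limit from the comparison table is treated here as 256,000 tokens.

```python
# "Up to 256K tokens" from the comparison table, treated as 256,000 here.
GEMMA_MAX_CONTEXT_TOKENS = 256_000


def recommend_model(
    context_tokens: int,
    needs_offline: bool = False,
    data_stays_on_device: bool = False,
    prefers_managed_infra: bool = False,
) -> str:
    """Return "Gemma 4" or "Gemini" following the article's rules of thumb."""
    if needs_offline or data_stays_on_device:
        # Only the local model satisfies these constraints.
        if context_tokens > GEMMA_MAX_CONTEXT_TOKENS:
            raise ValueError("Input exceeds the local context window; split it first")
        return "Gemma 4"
    if context_tokens > GEMMA_MAX_CONTEXT_TOKENS or prefers_managed_infra:
        return "Gemini"
    # With no hard constraint either way, default to the option without API costs.
    return "Gemma 4"


print(recommend_model(context_tokens=8_000, needs_offline=True))
print(recommend_model(context_tokens=1_200_000))
```

The first call picks Gemma 4 because the app must work offline; the second picks Gemini because the input is larger than the local context window.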

Gemma 4 marks a milestone where open models are no longer just “lite” versions of their proprietary cousins. By focusing on agentic reasoning, multimodal native support, and local efficiency, Google has provided the global developer community with a versatile toolkit for the future of AI. Whether it is powering a local assistant on a smartphone or driving complex data analysis in the cloud, Gemma 4 is set to become the backbone of the next generation of intelligent, autonomous applications.

Google’s Gemma 4 represents a significant leap forward, positioning open models not merely as diluted versions of their proprietary counterparts, but as fully capable, cutting-edge tools. By equipping developers with the tools to build autonomous, reasoning-capable systems that can run on anything from a smartphone to a massive data center, Google is democratizing the next frontier of digital intelligence.
